Recently we ran another Feed workshop in the Natimuk Hall, inviting a group of local actors to interact with the animated scenes I’d been developing over the past few months.

The main plan was to see how performers actually meshed with the projections I’d been developing, both in terms of how they could engage with the projections and how well the projections would respond to them. With a lot of things, you can imagine how they might work when you’re looking at them on the computer screen, but until you actually see them on a stage with real people it’s hard to be sure. Often the live effect is very different from the screen effect, particularly the speed things move at: things that seem to drift around the monitor in a leisurely manner rip across the scrim at lightning speed, confusing the performers and inducing seizures in the audience. Luckily a lot of the worst of this became obvious during the Horsham workshops late last year, so I was a lot more mindful of it going into this one.
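As a rough illustration of why the same animation feels so much faster on stage: an object that crosses the frame in a fixed time covers far more physical distance per second on a wide scrim than on a monitor. The widths and timing below are made-up numbers for the sake of the arithmetic, not measurements from the hall.

```python
def physical_speed(display_width_m, seconds_to_cross):
    """Speed in metres per second of an object that crosses the
    full width of a display in the given time."""
    return display_width_m / seconds_to_cross

# All three values are hypothetical, chosen only to show the scaling.
MONITOR_WIDTH_M = 0.5    # assumed desktop monitor width
SCRIM_WIDTH_M = 8.0      # assumed projected width on the scrim
SECONDS_TO_CROSS = 10.0  # a "leisurely" drift across the frame

monitor_speed = physical_speed(MONITOR_WIDTH_M, SECONDS_TO_CROSS)
scrim_speed = physical_speed(SCRIM_WIDTH_M, SECONDS_TO_CROSS)

print(f"monitor: {monitor_speed:.2f} m/s, scrim: {scrim_speed:.2f} m/s")
print(f"speed-up on stage: {scrim_speed / monitor_speed:.0f}x")
```

With numbers like these, the speed-up is simply the ratio of the two widths, so slowing an animation down by roughly that factor would be one starting point for matching the feel it had on the monitor.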

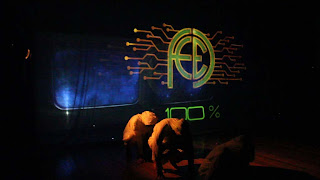

One of the key elements of the play is the feed itself, a cloud of data that tracks each performer, full of contextually relevant info, helpful pointers and targeted advertising. Characters can review new images from the feed, send images to one another, share them with a group or communicate via text, all while moving around on the stage.

The script requires that there be as many as seven performers on stage doing this at any one time, and keeping track of it all, and keeping it even vaguely reliable, has been the biggest challenge of the project so far. I’ve been using a range of techniques: video tracking, the Kinect, and manual tracking with the iPad via TouchOSC. Each method has its strengths and weaknesses, and likely the ultimate solution will feature some kind of combination of the techniques. Manual tracking works really well up to about four objects, at which point everything goes to pot and the operator’s head explodes. The Kinect is awesome (for the depth tracking and for not requiring visible light) but has some annoying limitations. It is limited to roughly a 6-meter area and it can’t see through the scrim we need to have hanging across the stage, so it can only track people in front of that. Visible light tracking allows for a greater range and sees right through the scrim, but it lacks the depth perception of the Kinect and, being sensitive to visible light, gets confused by the projections. The biggest problem with all the automated tracking is that the computer tends to get confused when performers cross paths or bump into each other (that’s where human operators really come into their own).

Something I haven’t tried yet but would like to is planting some kind of Wi-Fi tracker on each performer, allowing them to be tracked and uniquely identified anywhere in the room. From what I’ve read, there can be issues with accuracy and lag, but if it could be got working it ought to be perfect. Anyone with any experience of this, PLEASE get in touch.
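The path-crossing confusion is easy to reproduce in a toy example. The sketch below is not the tracking code used in the workshop; it is a minimal nearest-neighbour tracker, with hypothetical names (`update_tracks`, `path_a`, `path_b`) and made-up positions in metres, that hands each known performer the closest new detection. When two performers pass each other between frames, the closest detection belongs to the other person and the labels silently swap.

```python
import math

def update_tracks(tracks, detections):
    """Give each tracked performer the nearest unclaimed detection.

    tracks: dict mapping performer id -> last known (x, y) position
    detections: list of unlabelled (x, y) positions from the current frame
    """
    remaining = list(detections)
    updated = {}
    for performer_id, last_pos in tracks.items():
        if not remaining:
            # Nothing left to claim: keep the last known position.
            updated[performer_id] = last_pos
            continue
        nearest = min(remaining, key=lambda p: math.dist(p, last_pos))
        remaining.remove(nearest)
        updated[performer_id] = nearest
    return updated

# Hypothetical paths: A walks left to right, B walks right to left,
# and they pass each other between the second and third frames.
path_a = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
path_b = [(2.5, 0.0), (1.5, 0.0), (0.5, 0.0), (-0.5, 0.0)]

tracks = {"A": path_a[0], "B": path_b[0]}
for true_a, true_b in zip(path_a[1:], path_b[1:]):
    # The tracker only ever sees unlabelled positions.
    tracks = update_tracks(tracks, [true_a, true_b])

swapped = tracks["A"] == path_b[-1] and tracks["B"] == path_a[-1]
print(tracks, "swapped:", swapped)
```

Position alone cannot tell "crossed over" from "bumped and turned back", which is why a per-performer identity signal (a human operator, or a uniquely identified tag on each performer) is so attractive.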

And thanks again to Regional Arts Victoria, whose support allowed this recent stage of the development to happen. It feels like we’ve made massive headway over the last few months and the whole project is creeping steadily closer to being a reality.

These are all stills from the video we shot (thanks Gareth Llewellin). I’ll be uploading that once I’ve had a chance to edit something together, but likely there’ll be a bunch more stills first, focusing on some of the scenes we worked through.